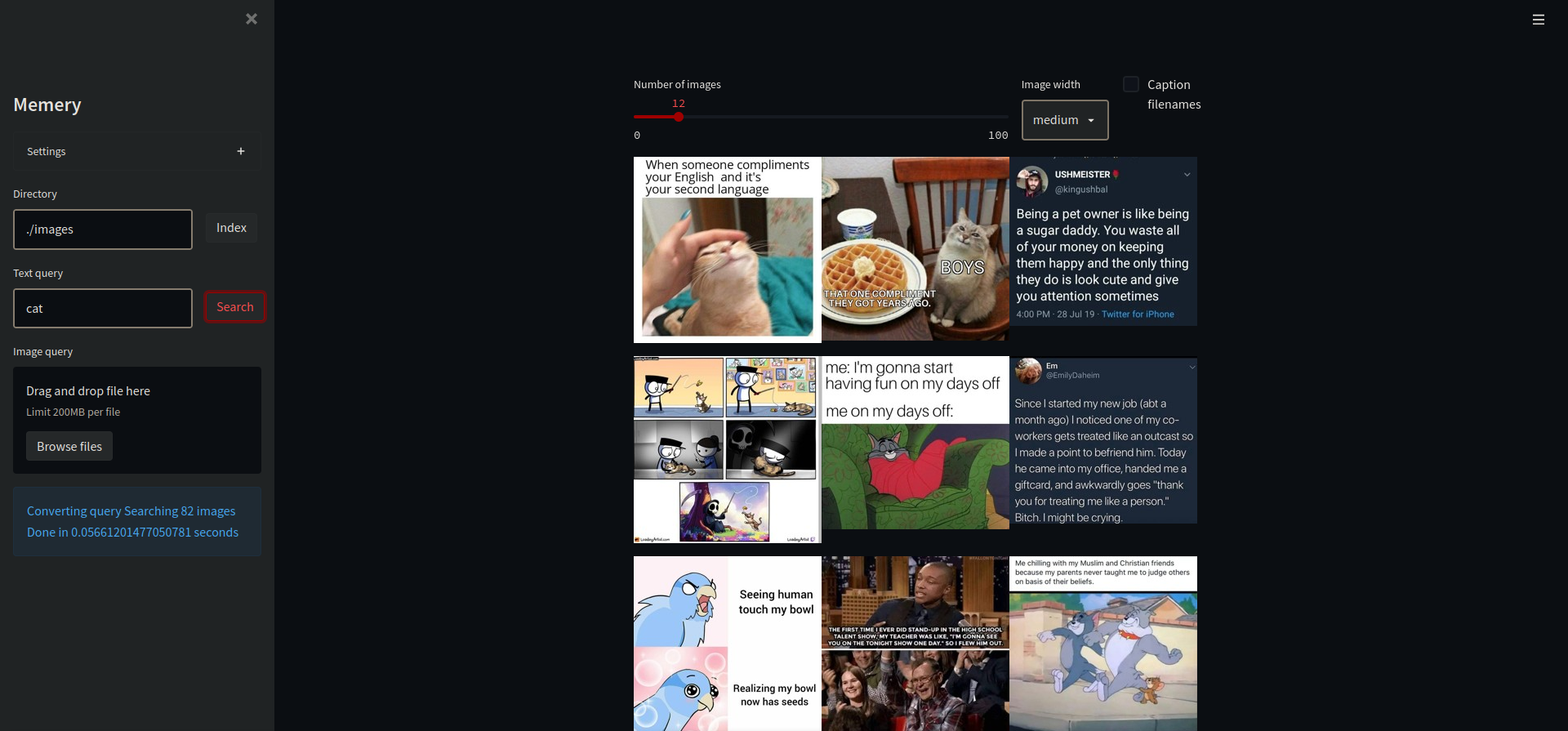

CLIP came out in January 2021. By March I had a working tool. Type “astronaut on a horse,” get astronauts on horses. Type “that tweet about consciousness,” get the screenshot. No tags, no filenames. Just describe the thing.

Three interfaces: browser GUI, command line, Python library.

```python
from memery.core import queryFlow

results = queryFlow('./memes/', 'dad joke')
```
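The idea behind this kind of search is simple enough to sketch: CLIP maps both text and images into the same vector space, so a query reduces to ranking images by how close their embeddings sit to the embedding of the text. What follows is a rough illustration of that ranking step, not Memery's actual code. The function names, file names, and three-dimensional vectors are all made up for the example; real CLIP embeddings have hundreds of dimensions and come from the model's encoders.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def rank_images(query_embedding, image_embeddings):
    """Return (path, score) pairs sorted from most to least similar."""
    scored = [
        (path, cosine_similarity(query_embedding, embedding))
        for path, embedding in image_embeddings.items()
    ]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)

# Hypothetical toy embeddings standing in for CLIP image-encoder output.
image_embeddings = {
    "astronaut_horse.png": [0.9, 0.1, 0.0],
    "consciousness_tweet.png": [0.1, 0.9, 0.2],
    "dad_joke.jpg": [0.0, 0.2, 0.9],
}

# Hypothetical stand-in for the text-encoder output of the query "dad joke".
query_embedding = [0.1, 0.1, 0.95]

ranked = rank_images(query_embedding, image_embeddings)
for path, score in ranked:
    print(f"{score:.3f}  {path}")
```

In a real tool the image embeddings would be computed once and cached, so each new query costs only one text encoding plus the similarity ranking.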

570 stars on GitHub. No Product Hunt launch, no Hacker News post. It just spread.

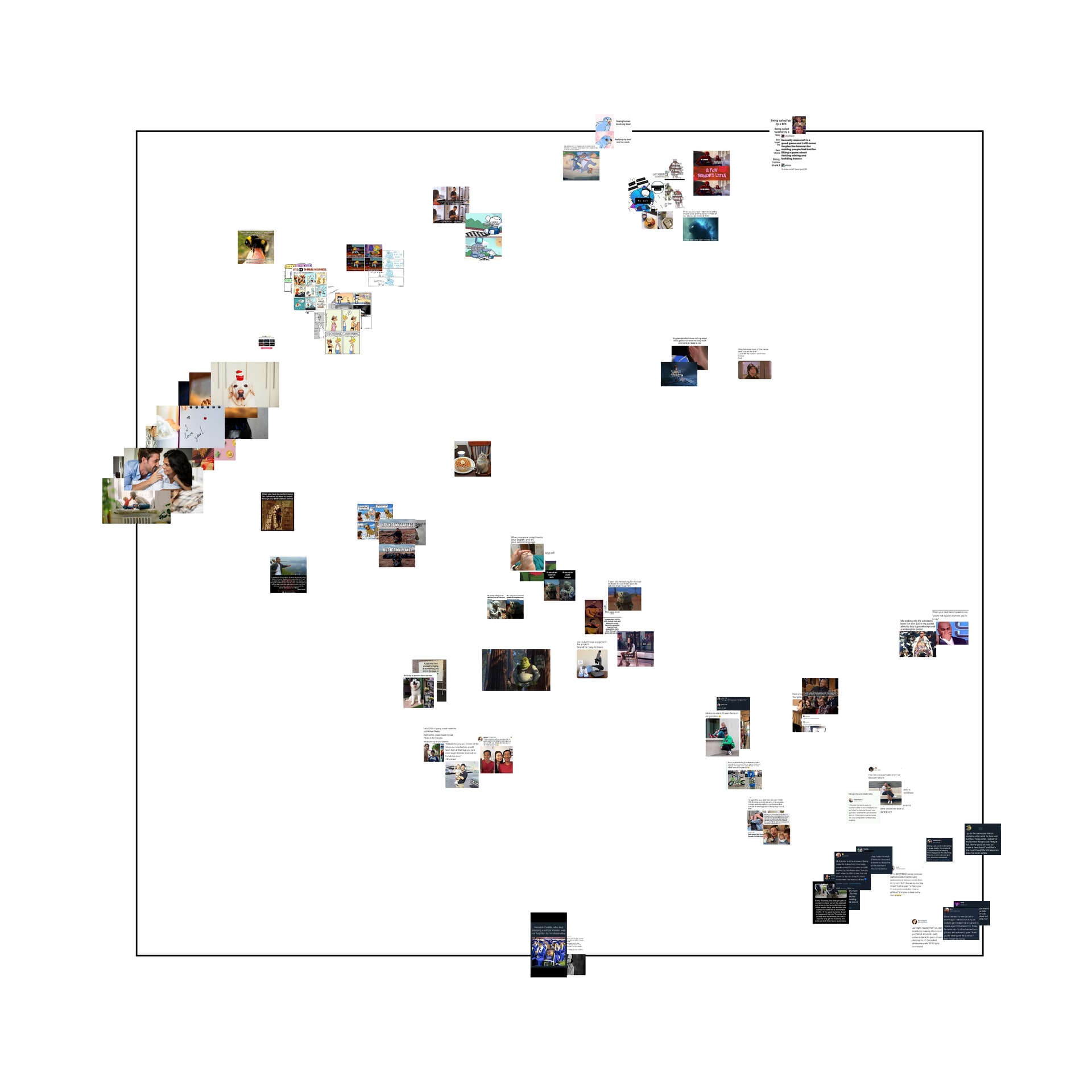

Building Memery and watching CLIP navigate semantic space made something click. Two months later I wrote Unified Meme Theory — memes are pictures-plus-words because they represent gradients in semantic space, and CLIP proved it by learning to traverse that space without being told to.

Then Apple and Google put CLIP-style embeddings into their photo apps.