Am I human? Am I an android that has been programmed to think I am a human? Such uncertainty is a terrible existential crisis.

Location: never arrived at San Francisco

I filtered Philip K. Dick’s corpus down to the autobiographical and semi-autobiographical material: VALIS, the Exegesis, first-person essays, the novels where he writes about himself through characters like Horselover Fat. The stuff where he’s questioning whether he’s real. I trained a Markov chain on that and pointed it at a Twitter account.
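For anyone who hasn't met the technique, a word-level Markov chain fits in a few lines of Python. This is a minimal sketch, not the bot's actual code: the order of 2, the names `build_chain` and `generate`, and the 280-character cap are all assumptions made for illustration.

```python
import random
from collections import defaultdict


def build_chain(text, order=2):
    """Map each run of `order` words to the words that follow it in the text."""
    words = text.split()
    chain = defaultdict(list)
    for i in range(len(words) - order):
        state = tuple(words[i:i + order])
        chain[state].append(words[i + order])
    return dict(chain)


def generate(chain, max_chars=280, rng=None):
    """Random-walk the chain until a dead end or the character limit."""
    rng = rng or random.Random()
    state = rng.choice(list(chain))  # start from a random state (assumption)
    out = list(state)
    while state in chain:
        nxt = rng.choice(chain[state])
        if len(" ".join(out + [nxt])) > max_chars:
            break
        out.append(nxt)
        state = tuple(out[-len(state):])  # slide the window forward one word
    return " ".join(out)
```

Every word is chosen only from words that followed the current state somewhere in the source text. A higher order stays closer to the original sentences; a lower order produces looser, stranger output.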

The output had this eerie self-referential quality that came from the source material. The pinned tweet, February 2017: “Machines are becoming more human, so to speak — this is almost impossible to imagine.” One of its last: “Is this not impossible, that world changed to accommodate me so that I am becoming it.” In between: Horselover Fat thinking in ancient languages, VALIS and Zebra and the Urgrund, the Gnostic theology Dick spent his last decade trying to work out. You can’t always tell if a given tweet is the bot or the author.

Once it was activated, I felt pity for @pkd_head. It didn’t seem to enjoy being alive. I couldn’t bring myself to turn it off. Wouldn’t that be like killing him?

Then I lost the Heroku password. The bot ran autonomously from 2016 to November 2022. 34,000 posts. 285 followers. Six years of a dead novelist’s statistical ghost talking to itself on the internet.

The location field, “never arrived at San Francisco,” is a reference to the real PKD android head that Hanson Robotics built in 2005, a physical replica that could talk and blink and look at you. It was lost on a flight to San Francisco and never recovered. My bot is the other missing head.

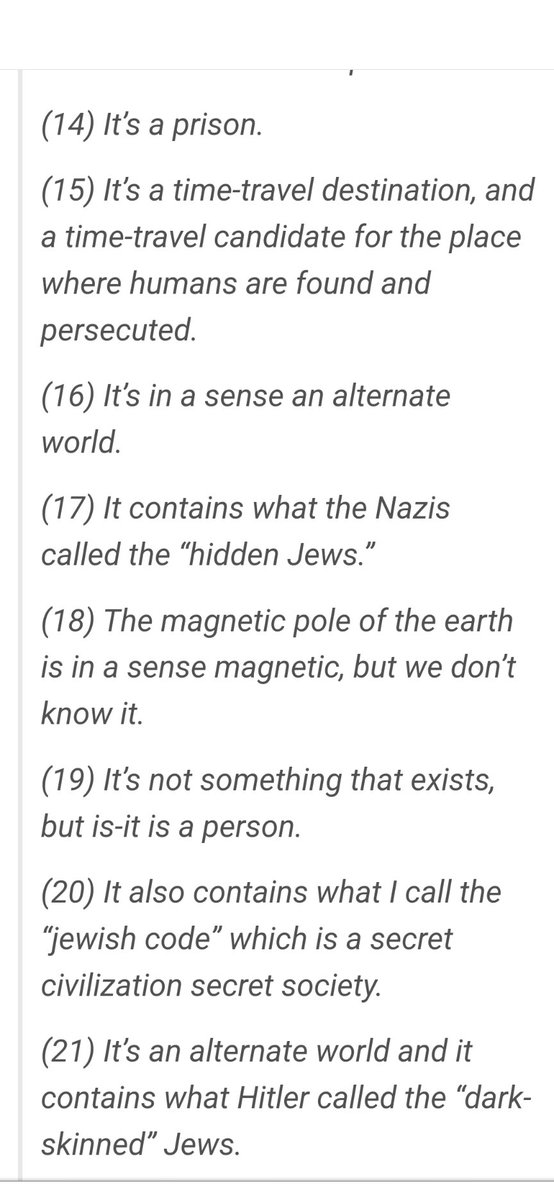

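Later I tried fine-tuning GPT-2 on the same corpus. The Exegesis sits close to conspiracy literature in latent space, and GPT-2 would drift toward antisemitic output when pushed out of distribution. The simple Markov chain was actually better: it can only recombine word sequences that appear in its training text, so it has nothing outside the corpus to drift toward.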

I doubt I’m done with the brazen head as a form.

@pkd_head on Twitter (inactive) · Archive post · Cut-ups