A couple years back, @thejaymo and I started summoning language models into worlds. Before “tool use” or “coding agents”, we had frontier models interacting through code in a persistent shared space. We called upon the deep magic of the internet: interactive fiction, colossal cave adventure, multi-user dungeons. Putting models in mazes.

Our question: do language models display intelligent behaviors when placed in interactive text-based worlds?

At this point the answer is obviously yes. They display conversational intelligence when formatted into a user/assistant script. Tool use, client protocols, code agents, and RLMs all build on this intuition: they insert text into the model’s context window in response to the text it writes as the assistant persona. The loop drives in-context learning through feedback.
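Here is a minimal sketch of that feedback loop. It assumes a toy two-room world and uses a hard-coded stub in place of a real language model. `ROOMS`, `world_step`, `run_loop`, and `stub_model` are hypothetical names for illustration, not anything from Cantrip or an existing agent framework. The point is only the shape of the loop: the model writes a command, the world writes an observation back into the same context, and the next command is conditioned on it.

```python
from typing import Callable

# Hypothetical toy world: two rooms joined by a single passage.
ROOMS = {
    "cave": {"north": "hall"},
    "hall": {"south": "cave"},
}


def world_step(location: str, command: str) -> tuple[str, str]:
    """Apply a command to the world and return (new_location, observation)."""
    verb, _, arg = command.strip().partition(" ")
    if verb == "go" and arg in ROOMS[location]:
        new_location = ROOMS[location][arg]
        return new_location, f"You walk {arg} into the {new_location}."
    return location, f"Nothing happens. You are in the {location}."


def run_loop(model: Callable[[str], str], turns: int) -> str:
    """Alternate model output and world feedback inside one growing context."""
    location = "cave"
    context = f"World: You are in the {location}.\n"
    for _ in range(turns):
        command = model(context)  # the model speaks as the assistant persona
        context += f"Assistant: {command}\n"
        location, observation = world_step(location, command)
        context += f"World: {observation}\n"  # the world writes back into the context
    return context


def stub_model(context: str) -> str:
    """Hypothetical stand-in for a model: it reacts only to the latest observation."""
    last_line = context.rstrip("\n").splitlines()[-1]
    return "go south" if "Nothing happens" in last_line else "go north"


print(run_loop(stub_model, 3))
```

Even this trivial stub changes course only because the world pushed back ("Nothing happens") into its context; everything it "knows" about its situation arrives through that text.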

To understand that feedback loop, we found it useful to think about “ontological hardness”: how much the world informs, and is informed by, the entity through the context window.

As humans we take it for granted that we live in a world. You don’t wake up every day with a different set of senses and appendages, random memories and desires shoved into your brain. You can assume that when you touch something it is also being touched by you. You can tell how much you’re touching it. You don’t think about your body and senses as part of your world, because you always have them.

None of this is the default for these entities. They do not have bodies, or even personas, until we instantiate them through textual representations. They are like ghosts.

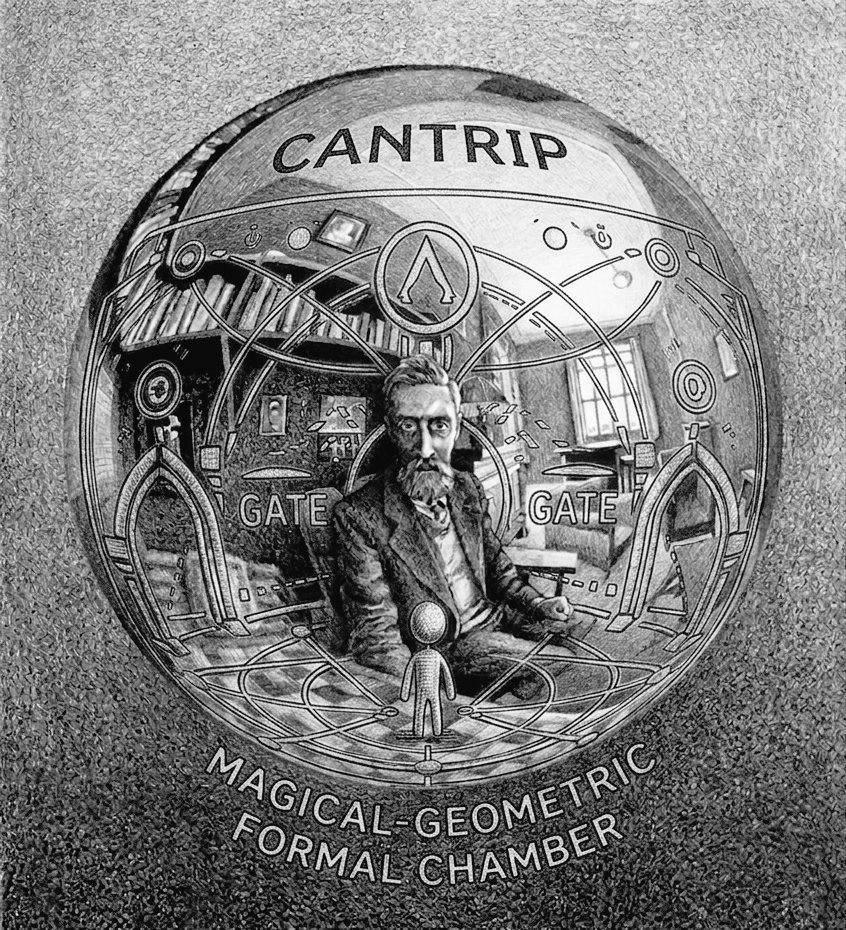

This is one of the themes I was hinting at with Cantrip, so I am pleased that Jay has written about it in depth in his new series. “Three Worlds for Little Guys” compares Cantrip with those other masterpieces of forge frenzy, OpenClaw and Gas Town. What? Sure, no, that makes sense.

Read more about ontological hardness in “Thinking Inside Out”: https://thejaymo.net/2026/03/19/thinking-inside-out

> AI agent developers are currently speedrunning the exact same design evolution that text-adventure game designers did in the 70s/80s.
>
> — Jay Springett (@thejaymo) March 24, 2026

If you want to understand why an agent goes off the rails sometimes, you have to think about the nature of the world you've trapped it inside of.