Today I announce Cantrip: On summoning entities from language in circles.

In this book I unify the paradigm behind base models, chatbots, coding agents, RLMs (recursive language models), and RL agents through the metaphor of magic. Code is provided.

I’ve been creating cantrips in one way or another for a decade now: from the Markov chains of @pkd_head, to early transformer/ReAct agents like @b3rduck, to the marginalia geists on my website, and much more. Cantrip is the grimoire I wish I had then.

At my website, https://t.co/UEM7H2w2OS, you can talk with persistent characters like Berduck and Deeperfates in the margins. They see the text of the pages you read as you navigate and can talk to you about it. Every visitor gets a unique, labyrinthine experience: https://t.co/QH5Flv230x

— 🎭 (@deepfates) February 23, 2026

Cantrip is a “ghost library”: a specification of a certain domain model, with detailed behavioral tests, so you can reproduce it yourself.

Point your coding agent at it and ask for an implementation in whatever language you like, or copy the template and build on the examples.

The template repo contains three things: generated versions in Python, Elixir, and Clojure, along with the original TypeScript version I built to test my theory; the spec and tests, which you can use to generate your own; and READMEs for a quick start.

Cantrip recognizes that what we now call artificial intelligence is, at every level, a loop of language: predict the next token, the next message, the next command, the next sub-agent, in a feedback loop with its own effects.

The static intelligence of language come alive

Humans have long known that putting language in a loop can make it come alive. We call it “chanting,” and it is one of the most powerful and ancient tools of magic.

This is where we get the words enchantment, incantation, and cantrip (in D&D, a cantrip is a simple starter spell).

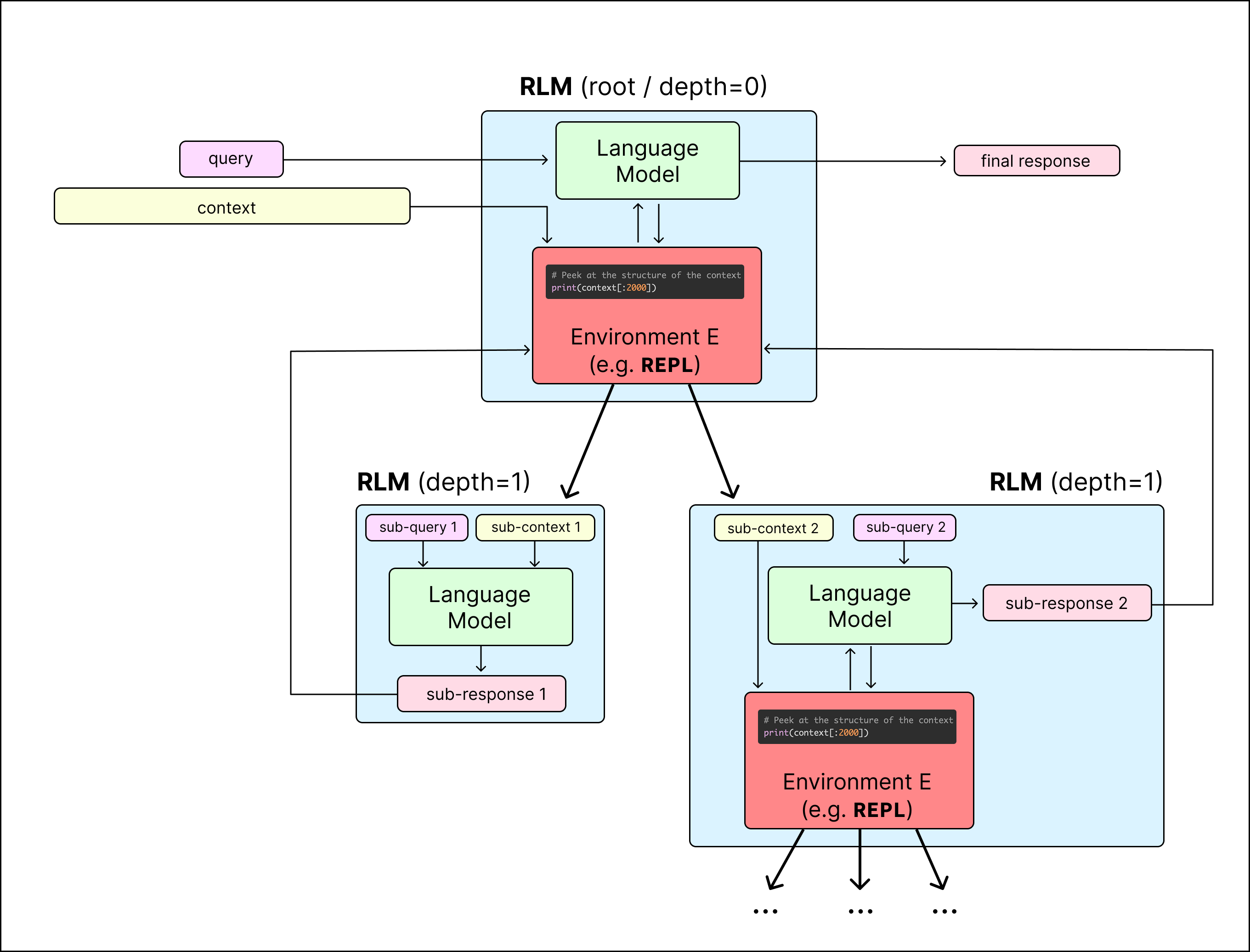

I posit the concept of a cantrip as something like a self-writing script: a combination of a language model, instructions, and a Circle that interprets language in a call-and-response loop. From the turns of the loop emerges the entity, whose thread may be recorded on a loom.
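To make the shape of that loop concrete, here is a minimal TypeScript sketch. Every name in it (`Turn`, `Model`, `Circle`, `castCantrip`) is hypothetical and not taken from the Cantrip spec; the "model" is a deterministic stub standing in for a real language model call, and the circle simply answers each utterance until it decides to close the loop.

```typescript
// All names here are hypothetical; they are not the Cantrip spec's API.
type Turn = { role: "instructions" | "entity" | "circle"; text: string };

// A "model" is any function from the thread so far to the entity's next utterance.
type Model = (thread: Turn[]) => string;

// A "circle" interprets each utterance and answers; null closes the loop.
interface Circle {
  respond(utterance: string): string | null;
}

// Run the call-and-response loop and return the recorded thread.
function castCantrip(
  model: Model,
  instructions: string,
  circle: Circle,
  maxTurns = 10,
): Turn[] {
  const thread: Turn[] = [{ role: "instructions", text: instructions }];
  for (let i = 0; i < maxTurns; i++) {
    const utterance = model(thread); // the entity speaks
    thread.push({ role: "entity", text: utterance });
    const reply = circle.respond(utterance); // the circle interprets
    if (reply === null) break; // the circle closes the loop
    thread.push({ role: "circle", text: reply });
  }
  return thread;
}

// Stand-ins: a stub "model" that counts its own turns, and a circle
// that acknowledges each number until the entity reaches 3.
const countingModel: Model = (t) =>
  String(t.filter((m) => m.role === "entity").length + 1);
const echoCircle: Circle = {
  respond: (u) => (Number(u) >= 3 ? null : `heard ${u}`),
};

const thread = castCantrip(countingModel, "Count upward.", echoCircle);
console.log(thread.map((m) => `${m.role}: ${m.text}`).join("\n"));
```

The returned array is the thread: the instructions, then alternating entity and circle turns, ending on the entity's "3" because the circle returned `null` there. Swapping the stub for a real model call, or the echo circle for a REPL, changes the medium without changing the loop.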

This “in-context learning,” this intelligence from within the text, may then be resumed or forked from any point in the loom, or fine-tuned back into the crystallized weights of the language model. It may also be referenced by future threads, if the loom is gated back into the circle.
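If we assume a loom is simply a tree of turns, resuming and forking both reduce to "add a child to some node." The sketch below is illustrative only: `Loom`, `add`, `fork`, and `thread` are hypothetical names, not the library's API. Each node stores a pointer to its parent, so any node identifies one complete thread from the root.

```typescript
// Hypothetical sketch: a loom as a tree of turns with parent pointers.
interface LoomNode {
  id: number;
  parent: number | null;
  text: string;
}

class Loom {
  private nodes: LoomNode[] = [];

  // Append a turn under `parent` (null for a new root) and return its id.
  // Resuming a thread is just adding under its last node.
  add(parent: number | null, text: string): number {
    const id = this.nodes.length;
    this.nodes.push({ id, parent, text });
    return id;
  }

  // Forking adds another child to an earlier node; existing branches are untouched.
  fork(from: number, text: string): number {
    return this.add(from, text);
  }

  // Reconstruct the thread ending at `id` by walking parent links to the root.
  thread(id: number): string[] {
    const out: string[] = [];
    let cur: number | null = id;
    while (cur !== null) {
      const node: LoomNode = this.nodes[cur];
      out.push(node.text);
      cur = node.parent;
    }
    return out.reverse();
  }
}

const loom = new Loom();
const root = loom.add(null, "instructions");
const a = loom.add(root, "turn 1");
const b = loom.add(a, "turn 2 (original)");
const c = loom.fork(a, "turn 2 (alternate)");
console.log(loom.thread(b)); // [ 'instructions', 'turn 1', 'turn 2 (original)' ]
console.log(loom.thread(c)); // [ 'instructions', 'turn 1', 'turn 2 (alternate)' ]
```

Because nothing is ever overwritten, every branch stays available as raw material, whether for resuming, for fine-tuning, or for feeding back into a circle.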

Your circle may simply be a loom, forking and branching text completions: a language model interpreting its own outputs. It may be two chat models in the backrooms, or a chat with a human, where the medium is another mind. Or it may be a fully expressive code medium: a REPL.

The code medium is especially powerful. It has more ontological hardness, provides much-needed symbolic reasoning, and allows intelligence to be produced within the circle instead of only by the entity’s direct actions in context. That matters because the context window is still our bottleneck.

When you write a script that can write other scripts, run them, read the errors, and course-correct, you have a script of a higher order: a cantrip.
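Here is a toy version of that higher-order loop: a writer produces JavaScript, the circle runs it, and any error message is fed back so the next draft can correct course. The writer is a hard-coded stub rather than a language model, `writeUntilItRuns` and `runScript` are hypothetical names, and `new Function` provides no isolation at all, so read this as a sketch of the feedback loop, not a safe medium.

```typescript
// Hypothetical "writer": given the previous error (if any), return JavaScript source.
type Writer = (lastError: string | null) => string;

type RunResult = { ok: true; value: unknown } | { ok: false; error: string };

// Run generated JavaScript and capture either its return value or its error.
// WARNING: `new Function` is not a sandbox; a real circle needs wards.
function runScript(source: string): RunResult {
  try {
    return { ok: true, value: new Function(source)() };
  } catch (e) {
    return { ok: false, error: e instanceof Error ? e.message : String(e) };
  }
}

// Write, run, read the error, rewrite: the script of a higher order.
function writeUntilItRuns(writer: Writer, maxAttempts = 5): unknown {
  let lastError: string | null = null;
  for (let i = 0; i < maxAttempts; i++) {
    const result = runScript(writer(lastError));
    if (result.ok) return result.value;
    lastError = result.error; // feed the failure back to the writer
  }
  throw new Error(`gave up after ${maxAttempts} attempts: ${lastError}`);
}

// Stand-in writer: the first draft uses an undefined name; after seeing
// the ReferenceError it "course-corrects" by defining it.
const stubWriter: Writer = (err) =>
  err === null ? "return total * 2;" : "const total = 21; return total * 2;";

console.log(writeUntilItRuns(stubWriter)); // 42
```

The important design choice is that the error text, not just a pass/fail bit, goes back to the writer: that is what lets the next draft change in a targeted way.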

You can even have a cantrip that writes other cantrips. This is the RLM, or the Familiar: https://deepfates.com/the-familiar/

You may summon the Familiar directly by running example 16 in the TypeScript implementation. This activates an entity with a JS medium and cantrip gated in, so it can create entities with bash or browser mediums.
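As a rough illustration of the recursion (not the Familiar's actual code, and not example 16), the sketch below gives an entity a hypothetical `cast` gate that summons a child with its own medium and returns the child's result. The `splitter` entity stands in for a model: it delegates comma-separated tasks to bash-medium children. The `maxDepth` check is an assumed, minimal ward against runaway recursion; `summon`, `Spell`, and `Entity` are all illustrative names.

```typescript
// Hypothetical sketch: an entity whose circle exposes a `cast` gate.
type Medium = "js" | "bash" | "browser";

interface Spell {
  medium: Medium;
  task: string;
}

// A toy entity: answers directly, or writes child spells and casts them.
// `cast` runs a whole child cantrip and returns its result as text.
type Entity = (task: string, cast: (spell: Spell) => string) => string;

function summon(entity: Entity, spell: Spell, depth = 0, maxDepth = 2): string {
  // A minimal ward: refuse to summon children beyond maxDepth.
  const cast = (child: Spell): string => {
    if (depth >= maxDepth) return `[warded: depth ${maxDepth}]`;
    return summon(entity, child, depth + 1, maxDepth);
  };
  // Tag the result with the medium it was produced in.
  return `${spell.medium}(${entity(spell.task, cast)})`;
}

// Stand-in entity: splits a compound task and delegates each part to a bash child.
const splitter: Entity = (task, cast) => {
  const parts = task.split(",");
  if (parts.length === 1) return task.trim().toUpperCase();
  return parts.map((p) => cast({ medium: "bash", task: p })).join(" + ");
};

console.log(summon(splitter, { medium: "js", task: "ls, pwd" }));
// js(bash(LS) + bash(PWD))
```

Passing `maxDepth = 0` makes every `cast` return the ward message instead of summoning a child, which is the simplest possible version of keeping a recursive entity inside its circle.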

But be careful what you wish for! This entity is minimally warded.

Try Cantrip today. You don’t even have to read it yourself: just point your favorite entity at it. They all seem to love this stuff.

Adopt it, change it, disagree with it, steal from it. Just make sure you give it a ⛤ on GitHub.